The Future of System Design: Emerging Patterns

When Yesterday’s Best Practices Become Tomorrow’s Technical Debt

Your production system works beautifully today. It handles 100,000 requests per second with 99.99% uptime. But here’s the uncomfortable truth: the architectural patterns you’re using were designed for a world that’s rapidly disappearing. Edge computing, AI workloads, WebAssembly runtimes, and eBPF-powered observability are fundamentally reshaping how we think about distributed systems. The question isn’t whether these patterns will replace current approaches—it’s how quickly you’ll need to adapt.

The Convergence: Five Patterns Redefining System Architecture

The future of system design isn’t about a single breakthrough—it’s about the convergence of five emerging patterns that work together to solve problems we couldn’t address before.

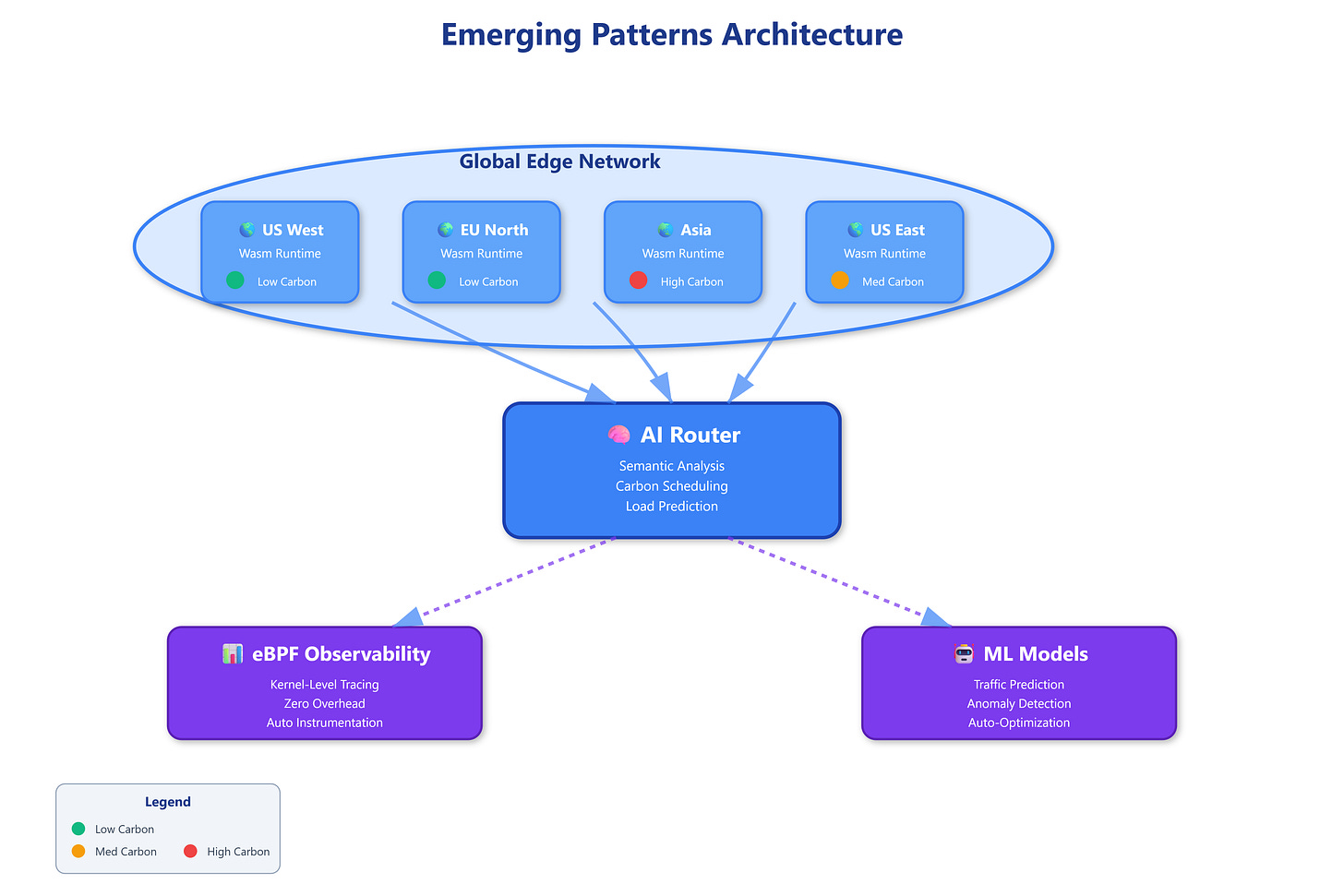

1. Edge-Native Architecture with Intelligent Placement

Traditional CDNs cache static content at the edge. The emerging pattern goes further: running full application logic at edge nodes with AI-powered workload placement. Instead of simple geographic routing, systems now use machine learning models to predict where requests will originate and pre-position compute resources accordingly.

The mechanism works through continuous traffic pattern analysis. When the system detects a spike in requests from Southeast Asia, it doesn’t just cache responses: it starts service instances on edge nodes in that region and shifts traffic to them. This happens automatically, using container orchestration that’s optimized for edge environments. Sub-second migration is the goal, and it is realistic mainly for lightweight, stateless services; anything that carries state also has to move or replicate its data.
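The placement loop described above can be sketched in a few lines. This is a minimal illustration, not a production scheduler: the exponentially weighted moving average stands in for whatever ML model a real system would use, and the region names, the `capacity_per_instance` value, and the function names are all assumptions made for the example.

```python
import math


def forecast_next(history: list[float], alpha: float = 0.5) -> float:
    """Predict the next requests-per-second value with an exponentially
    weighted moving average (a simple stand-in for a learned model)."""
    if not history:
        return 0.0
    estimate = history[0]
    for rps in history[1:]:
        # Recent observations count more than older ones.
        estimate = alpha * rps + (1 - alpha) * estimate
    return estimate


def plan_instances(traffic: dict[str, list[float]],
                   capacity_per_instance: float = 1000.0,
                   min_instances: int = 1) -> dict[str, int]:
    """Decide how many instances to pre-position in each region."""
    plan = {}
    for region, history in traffic.items():
        predicted = forecast_next(history)
        needed = math.ceil(predicted / capacity_per_instance)
        plan[region] = max(min_instances, needed)
    return plan


# Hypothetical traffic samples (requests per second, oldest first).
traffic = {
    "ap-southeast": [2000.0, 4000.0, 8000.0],  # spiking
    "eu-west": [3000.0, 3000.0, 3000.0],       # steady
}
plan = plan_instances(traffic)
print(plan)  # {'ap-southeast': 6, 'eu-west': 3}
```

The spiking region gets more instances than its average load alone would suggest, because the forecast weights the most recent samples more heavily.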

2. WebAssembly as the Universal Service Runtime

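WebAssembly (Wasm) is moving beyond the browser and emerging as a server-side runtime for microservices. Containers package an application together with its userland dependencies and share the host kernel; a Wasm module contains only the compiled code, so it is typically far smaller (100x is a commonly quoted figure) and can start in microseconds to milliseconds, depending on the runtime. Wasm is also largely language-agnostic: services written in Rust, Go, Python, or JavaScript can target the same module format and deploy identically, although toolchain maturity varies and interpreted languages such as Python and JavaScript usually ship their interpreter inside the module.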

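The real innovation is the security model. Wasm runs in a capability-based sandbox where services can only access resources you explicitly grant. The host grants those capabilities when it instantiates the module, and the runtime enforces them on every call; they are not verified at compile time. This sharply reduces exposure to the escape and privilege escalation attacks that affect containers, though bugs in the runtime itself remain possible. Each service gets exactly the permissions it needs.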
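To make the capability model concrete, here is a toy sketch of the idea. It does not use a real Wasm runtime or the WASI API; the `Sandbox` class, the `fs.read:` capability strings, and the in-memory file table are hypothetical names chosen for illustration. Real runtimes express the same idea differently, for example by preopening specific directories for a module.

```python
class CapabilityError(PermissionError):
    """Raised when a module uses a resource it was not granted."""


class Sandbox:
    """Toy host-side sandbox: every resource access is checked against
    the set of capabilities granted at instantiation time."""

    def __init__(self, files: dict[str, str], grants: set[str]):
        self._files = files                # stand-in for the host filesystem
        self._grants = frozenset(grants)   # fixed once the module is created

    def _check(self, capability: str) -> None:
        if capability not in self._grants:
            raise CapabilityError(f"capability not granted: {capability}")

    def read_file(self, path: str) -> str:
        # Deny by default: the path must have been granted explicitly.
        self._check(f"fs.read:{path}")
        return self._files[path]


host_files = {
    "/config/app.toml": "port = 8080",
    "/etc/secrets": "token",
}
sandbox = Sandbox(host_files, grants={"fs.read:/config/app.toml"})

config = sandbox.read_file("/config/app.toml")   # allowed
try:
    sandbox.read_file("/etc/secrets")            # never granted
    denied = False
except CapabilityError:
    denied = True

print(config, denied)  # port = 8080 True
```

The important property is the default: a resource that was never granted is unreachable, rather than reachable until something blocks it.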

3. eBPF-Powered Automatic Instrumentation

Current observability requires instrumenting your code with logging libraries and metrics exporters. eBPF (extended Berkeley Packet Filter) removes much of that work by attaching small, verified programs to hooks in the Linux kernel itself. Network packets, system calls, and resource allocations can be captured without modifying your application.

The breakthrough is low-overhead observability. eBPF programs are checked by the kernel verifier, JIT-compiled, and run in kernel space with near-native performance. The overhead is small rather than zero, and it grows with the number of events you probe. You get detailed traces of every request, including latency breakdowns for each service hop, without the 5-15% performance penalty often attributed to traditional in-process instrumentation. The system sees everything happening at the kernel level, including failures that never reach your application code.
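The kernel-side probes themselves are outside the scope of a short example, but the per-hop latency breakdown they enable is easy to sketch. The snippet below assumes a hypothetical event stream in which each probe emits an `enter` or `exit` record with a request ID, a hop name, and a nanosecond timestamp; that schema is an assumption for illustration, not the output format of any particular eBPF tool.

```python
from collections import defaultdict
from typing import NamedTuple


class KernelEvent(NamedTuple):
    request_id: str
    hop: str       # service that handled this part of the request
    kind: str      # "enter" or "exit"
    ts_ns: int     # timestamp in nanoseconds


def latency_breakdown(events: list[KernelEvent]) -> dict[str, dict[str, int]]:
    """Pair enter/exit events and sum time spent per hop for each request."""
    starts: dict[tuple[str, str], int] = {}
    totals: dict[str, dict[str, int]] = defaultdict(lambda: defaultdict(int))
    for ev in sorted(events, key=lambda e: e.ts_ns):
        key = (ev.request_id, ev.hop)
        if ev.kind == "enter":
            starts[key] = ev.ts_ns
        elif ev.kind == "exit" and key in starts:
            # Unmatched exits are ignored instead of producing bogus spans.
            totals[ev.request_id][ev.hop] += ev.ts_ns - starts.pop(key)
    return {rid: dict(hops) for rid, hops in totals.items()}


events = [
    KernelEvent("req-1", "gateway", "enter", 1000),
    KernelEvent("req-1", "auth", "enter", 1200),
    KernelEvent("req-1", "auth", "exit", 1700),
    KernelEvent("req-1", "gateway", "exit", 2500),
]
breakdown = latency_breakdown(events)
print(breakdown)  # {'req-1': {'auth': 500, 'gateway': 1500}}
```

Because the events come from the kernel rather than from the application, the same aggregation works for services written in any language, with no code changes.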

4. AI-Native Service Mesh with Semantic Routing

Traditional service meshes route based on simple rules: send 10% of traffic to the new version, route based on headers, implement circuit breakers. AI-native meshes understand the semantic meaning of requests. They parse request content in real-time, classify intent, and route to the optimal service instance based on predicted resource requirements.

For example, a request asking for “analyze Q4 revenue trends” gets routed to GPU-enabled nodes with access to the analytics database, while “update user preferences” goes to lightweight instances near the user’s location. The routing decisions sit on the request path, so they have to be fast: the mesh uses tiny ML models that run in the data-plane proxies, while the control plane trains and distributes them.
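A minimal sketch of semantic routing follows. The keyword matcher is a deliberately crude stand-in for the small ML classifier described above, and the `Pool` type, the pool names, and the `HEAVY_TERMS` vocabulary are assumptions invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pool:
    name: str
    has_gpu: bool


# Hypothetical vocabulary that signals a compute-heavy analytics request.
HEAVY_TERMS = {"analyze", "forecast", "trends", "report"}


def classify_intent(text: str) -> str:
    """Label a request as 'analytics' or 'transactional'.
    A real mesh would run a small trained model here."""
    words = set(text.lower().split())
    return "analytics" if words & HEAVY_TERMS else "transactional"


def route(text: str, pools: list[Pool]) -> Pool:
    """Pick the first pool whose hardware matches the predicted intent."""
    wants_gpu = classify_intent(text) == "analytics"
    for pool in pools:
        if pool.has_gpu == wants_gpu:
            return pool
    return pools[0]  # fall back to any pool instead of dropping the request


pools = [Pool("edge-lightweight", has_gpu=False), Pool("gpu-analytics", has_gpu=True)]

print(route("analyze Q4 revenue trends", pools).name)  # gpu-analytics
print(route("update user preferences", pools).name)    # edge-lightweight
```

Keeping classification and pool selection separate means the classifier can be swapped for a trained model without touching the routing logic.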

5. Sustainability-Aware Scheduling

The emerging pattern treats carbon footprint as a first-class scheduling constraint. Systems monitor the real-time carbon intensity of the electricity grid that powers each data center (which depends on renewable energy availability) and shift workloads dynamically. Batch jobs run when solar power peaks. Training jobs migrate to regions with hydroelectric power.

This isn’t just environmental, it’s economic. Some pricing models are beginning to reflect carbon intensity, with discounts on the order of 30-40% for workloads that run on renewable energy; treat that figure as indicative, since pricing varies by provider and region. The scheduler optimizes for both latency and carbon cost, making intelligent trade-offs based on workload priorities.
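The latency-versus-carbon trade-off can be expressed as a weighted score. The sketch below is one simple way to do it, not a description of any real scheduler: the region names, latency figures, carbon intensities, and the `carbon_weight` parameter are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Region:
    name: str
    latency_ms: float        # expected latency to the workload's users
    carbon_g_per_kwh: float  # current grid carbon intensity


def pick_region(regions: list[Region], carbon_weight: float) -> Region:
    """Return the region with the lowest blended latency/carbon score.

    carbon_weight is in [0, 1]: 0 optimizes purely for latency,
    1 purely for carbon intensity.
    """
    # Normalize both metrics to [0, 1] so the weights are comparable.
    max_latency = max(r.latency_ms for r in regions) or 1.0
    max_carbon = max(r.carbon_g_per_kwh for r in regions) or 1.0

    def score(r: Region) -> float:
        return ((1 - carbon_weight) * r.latency_ms / max_latency
                + carbon_weight * r.carbon_g_per_kwh / max_carbon)

    return min(regions, key=score)


# Made-up numbers for illustration only.
regions = [
    Region("us-east", latency_ms=20, carbon_g_per_kwh=400),
    Region("nordics-hydro", latency_ms=120, carbon_g_per_kwh=30),
    Region("ap-south", latency_ms=200, carbon_g_per_kwh=600),
]

interactive = pick_region(regions, carbon_weight=0.1)  # latency-sensitive API
batch = pick_region(regions, carbon_weight=0.9)        # deferrable training job

print(interactive.name, batch.name)  # us-east nordics-hydro
```

Workload priority maps directly onto the weight: an interactive API keeps `carbon_weight` low and stays close to users, while a deferrable batch job raises it and moves to the cleanest grid.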